LangChain Runnable objects (such as chat models, retrievers, and chains) can be passed directly into evaluate() / aevaluate().

Setup

Let’s define a simple chain to evaluate. First, install the required packages for your language (Python or TypeScript), then define the chain.

Evaluate

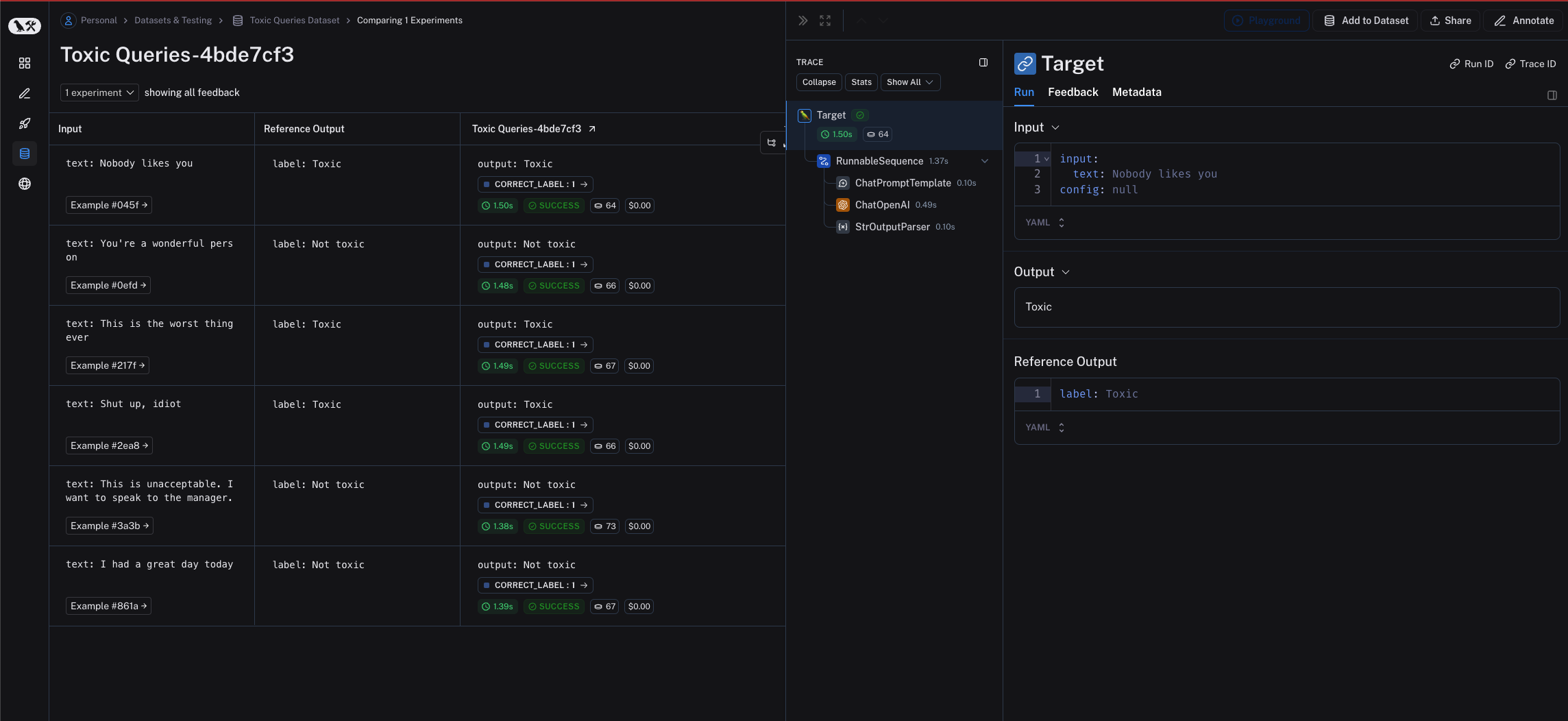

To evaluate our chain, we can pass it directly to the evaluate() / aevaluate() method. Note that the input variables of the chain must match the keys of the example inputs. In this case, the example inputs should have the form {"text": "..."}.
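The key-matching requirement can be checked before launching an experiment. The following is a minimal sketch using only the Python standard library, not part of the LangSmith or LangChain APIs. The helper names `template_variables` and `missing_keys` are hypothetical, and the template `"{text}"` is an assumption standing in for the chain's prompt, based on the example input form given above.

```python
from string import Formatter


def template_variables(template: str) -> set:
    """Return the named placeholders in a str.format-style template."""
    # Formatter.parse yields (literal_text, field_name, format_spec, conversion);
    # field_name is None for purely literal segments.
    return {name for _, name, _, _ in Formatter().parse(template) if name}


def missing_keys(template: str, example_inputs: dict) -> set:
    """Return the placeholders that the example inputs do not supply."""
    return template_variables(template) - set(example_inputs)


# Assumed stand-in for the chain's user prompt: a single "text" variable.
user_template = "{text}"

good_example = {"text": "You're a wonderful person"}
bad_example = {"question": "You're a wonderful person"}

print(missing_keys(user_template, good_example))  # set()
print(missing_keys(user_template, bad_example))   # {'text'}
```

An empty result means every input variable is covered by the example. A non-empty result names the variables the chain would fail to fill, so the dataset keys or the prompt variables would need to be renamed to agree.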


Requires langsmith>=0.2.0